The Mission of SSTorytime

An approach to managing human knowledge

If you want to know the deep thought behind an apparently ad hoc bit of software, please take a look at the project's own research website.

We tend to confuse knowledge with our desire for knowledge. In fact, you might say we covet other people's knowledge, but we don't always understand what that means. If somebody else knows the answer, that's 'world knowledge', but it isn't ours.

Do we need to know so much? There is, of course, an argument that we don't have to know everything ourselves. But then we need knowledge about how to make use of other people's knowledge. In the past, we've taken this for granted because it all sort of worked out when things were slower and there were fewer people and pressures on us all. What's new in the modern world is the pace of change, and the rate at which our old world knowledge expires. We need to update ourselves by deepening our relationships to what we want to know as the world accelerates. This is becoming stressful, and it places a focus on tools like databases and Artificial Intelligence to relieve that stress. But they are a devil's bargain, because they require us to trust without knowing.

Knowledge management is not really about writing stuff down, filing it, or putting it in a database. Knowledge existed before writing was invented; animals know things without using the World Wide Web. Knowledge management is more like tending a garden. You discover, you plant, you have to tend to growth, you organize, and you have to pull weeds and fix damage yourself. Eventually, you learn your way around the garden, where to pick flowers and vegetables. The bigger the garden, the more effort it takes to reach this level of familiarity. So where are the knowledge tractors and harvesters?

The key thing is that, to acquire knowledge, you have to have the intent to do it all yourself; otherwise it's merely someone else's knowledge. It requires our attention, and we can't pass that on to someone else.

Delegating and working together

So why not trust others? Why not just trust Artificial Intelligence and databases? The simple answer is that we need to know enough to judge whether what others tell us is reliable.

Because we can't know and do everything ourselves, we've learned to work together. Trusting others' knowledge and skill allows us to make use of it without understanding all the details. But we do need to check their homework for errors and for negligence. As soon as we delegate, we become managers and inspectors if we want to know anything at all; otherwise, we've simply accepted ignorance.

When we delegate, we exchange our knowledge for trust in someone or something else; but trust is also about knowing! We exchange our knowing of facts or skills for knowing about the accuracy of the person, book, or assistant (human or otherwise) that we're relying on.

Stories

When we're familiar with something (which is the actual definition of 'knowledge'), we can communicate it by telling stories about it to people, to machines, to abstract processes, and to work in general. By communicating it to a process we're engaged in, we make use of it. It could be how to operate a cash register or drive a car, or when and how much to water a crop. Basically, it's about having enough familiarity with cause and effect to trust ourselves to act appropriately in the appropriate circumstance or context.

As a simple rule of thumb, if your notes don't form an obvious story, they are incomplete, and you should seek to fill in the kinds of elements that allow a story to be told: in particular, the palette of relations you might use to relate the parts into a whole. This is not a trivial matter, but it points to the missing pieces. When you confront these issues, you find yourself pursuing understanding.

A simple measure of knowledge is what kind of story we could tell. A knowledgeable 'story' is much more than idle gossip about unfamiliar hearsay: it has to make sense, and it has to be grounded in intimate detail. Condensing knowledge into intentional stories (at different levels of detail for different purposes) helps us to pass on knowledge. Some stories are about solving problems; others are merely descriptive lessons learned.

Remembering and forgetting

Learning requires memory, and memory has a horizon. Things we learn and thoughts that we have are easily lost and forgotten. Writing stuff down (instructions, experiences, etc.) seems like a good idea, but it's useless if no one reads what is written regularly. It doesn't matter how you try to remember something: if you don't use the memory and revisit it frequently, it will decay to nothing. This is why most Wikis and documentation efforts fail. Writing notes for occasional use once a year, or only in case of emergency, is pointless unless they are used and rehearsed often. Wikipedia succeeds on average for a population because it's a many-to-many interaction, which keeps the pulse of interaction alive: someone is always looking at the information. But that doesn't mean that you, an individual, will learn from what is written there. Even Wikipedia information is not knowledge unless it's in someone's head. To the casual browser, it is merely information, even hearsay. Knowledge for the population is different from knowledge for a single person. A library doesn't make you smart if you don't read the books. So engineering knowledge involves engineering habits and behaviour.

Tools make work easier?

We're talking about knowing how to acquire and retain knowledge. Technology can help, but it can also get us stuck doing the wrong thing. If we take away too much of the work from people, people will forget how to do the work. That's how knowledge works.

Semantic knowledge representations have not evolved since the Semantic Web was proposed during the 1990s, at a time when the technology was primitive and the technologists weren't themselves experienced in how to use it.

Recently, graph databases have seemed to offer new possibilities for knowledge representation, but the methods for using them have been poorly developed and require specialized query languages and clumsy, outdated formats. In this project, I'm shifting the focus away from technology to content. We can use standard SQL databases and modern lightweight data formats to embrace familiarity. The aim is not to pickle a static knowledge graph like an RDF structure, but rather to establish a context-dependent switching network of thoughts that form stories. There's some overlap with what Large Language Models do, but they take control away from the user. Here we give it back.

A user workflow starts with a simple note-taking language, continues by ingesting the notes into a database using a graph model based on the causal Semantic Spacetime model, and ends with a simple web application that supports graph searches and data presentation. The aim is to make a generally useful library for incorporating into other applications, or for running as a standalone notebook service.
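To make the point about standard SQL concrete, here is a minimal sketch, assuming a hypothetical two-table layout (`node` and `link`) in SQLite via Python's built-in `sqlite3` module. It is not the project's actual schema or database; it only illustrates that nodes, named arrows and their meta-types fit comfortably into ordinary relational tables queried with plain SQL.

```python
import sqlite3

# Hypothetical schema for illustration only; not the project's real one.
SCHEMA = """
CREATE TABLE node (
    id   INTEGER PRIMARY KEY,
    text TEXT UNIQUE NOT NULL
);
CREATE TABLE link (
    src    INTEGER NOT NULL REFERENCES node(id),
    arrow  TEXT    NOT NULL,   -- relation name, e.g. 'next'
    sttype TEXT    NOT NULL,   -- one of the four meta-types
    dst    INTEGER NOT NULL REFERENCES node(id)
);
"""


def node_id(db, text):
    """Return the id of a node, inserting the node first if it is new."""
    db.execute("INSERT OR IGNORE INTO node(text) VALUES (?)", (text,))
    return db.execute("SELECT id FROM node WHERE text = ?", (text,)).fetchone()[0]


def add_link(db, src, arrow, sttype, dst):
    """Store one directed, named link between two (possibly new) nodes."""
    db.execute(
        "INSERT INTO link(src, arrow, sttype, dst) VALUES (?, ?, ?, ?)",
        (node_id(db, src), arrow, sttype, node_id(db, dst)),
    )


def neighbours(db, text):
    """Outgoing links of a node as (arrow, target text) pairs, in entry order."""
    rows = db.execute(
        """SELECT l.arrow, d.text
           FROM link l
           JOIN node s ON s.id = l.src
           JOIN node d ON d.id = l.dst
           WHERE s.text = ?
           ORDER BY l.rowid""",
        (text,),
    )
    return rows.fetchall()


db = sqlite3.connect(":memory:")
db.executescript(SCHEMA)
add_link(db, "Start", "next", "LEADSTO", "Find Door")
add_link(db, "Find Door", "next", "LEADSTO", "Do I have the key?")
print(neighbours(db, "Start"))  # [('next', 'Find Door')]
```

Graph traversal then becomes a matter of repeated joins on the `link` table, which any SQL database can do without a specialized query language.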

To devise a knowledge management system, our aims are:

- To assist in overcoming human limitations, while respecting the reason for them.

- To devise a way of getting experiences and thoughts into a computer representation that will be used actively and immediately. For this, we shall devise a language called N4L, or Notes For Learning.

The structure of cognition lies in decoding input into a lasting invariant representation, which is linked to a rich set of very familiar contexts, with reactive output and post-associative feedback that expands the integration of what you've memorized.

The distinction between an input representation, recall, and "information usability" (the process of distilling something useful) is the basic problem to be tackled. It's the same process we face when attempting to learn a foreign language: our mental model is spontaneous, but forcing it through the bottleneck of language is hard, because we don't know all the words and we may never have tried to say what we want to say before. We would like to ensure that there is a sufficient reservoir of examples to draw on and use as templates.

We may try to 'cram' words into memory by repetition, but in the heat of the moment we're unable to recall any of it. That's because memory lookup is contextual: the context in which we experience it and the context in which we try to learn it are totally different, and we don't know how to connect the two.

Part of our difficulty in making notes and organizing is also our lack of an overview. It doesn't take very much information to fill a page and exceed our visual resolution. Even if we could organize everything on a single sheet, our ability to resolve and comprehend it is limited. So what we need is a way of integrating disjointed fragments of note-taking to create that integrated model.

Tidying up is a learning strategy

If you do it once, it just hides information. If you keep doing it, improving and fiddling with the organization of things, you become intimately and cognitively familiar with the placement and usefulness of things. This is what our brains do. There has to be re-use activity, involving motor functions to extract things from their tidy places.

Memory strategy and organization

When we tidy, we often create subject boxes first. It's by putting something in the right box that we believe we understand it. For example, when writing this, I write a number of section titles and try to collect everything related to each one under its heading.

Reorganizing notes post hoc is very time-consuming, because it requires multiple passes and many decisions. Unless we place fragments of experience into boxes intentionally from the start, we lose the economic benefits of experience. Intent thus serves an economic function for a cognitive agent.

But there is a problem with this. When we group things together, we make trade-offs and approximations that we might not agree with later. For instance, is a duck-billed platypus a mammal or a bird? It's a warm-blooded species that lays eggs. It flouts the boundaries of the largest boxes in biology by belonging to two boxes, or to a box all by itself. When we rely on box logic, things always go wrong in the end. This is the trouble with ontologies as we use them in technology today. The main benefit of boxing is that it forces us to revisit the model over and over again and make connections. We then identify knowledge with those "aha!" moments at which we independently had insights. Those are the moments we remember, and through them we can recall what we learned.

Our philosophical affectations have led us to go too far, however, when we form entire world ontologies and try to fit everything into a single common spanning tree of knowledge. This is an abuse of process, because ontologies are only outgrowths of coordinate systems for indexing *contexts*. They belong to a separate sensory language that represents experience, not the concepts derived from cumulative processing and metaphoric expansion.

The temptation to continue classifying and sub-classifying by inventing ad hoc discriminators is a powerful tendency that occasionally runs riot and appeals to the bureaucratic mind. Even though applying discriminators from general experience ad hoc seems like a smart approach, it easily leads to tunnel vision.

SSTorytelling

We pass on knowledge by telling stories or building narratives. Some of these are rooted in facts, while others are metaphors or plain fiction, and we use them in different ways in different contexts to pass on facts and hypothetical lessons.

N4L, a note-taking language

We want a simple free-text format for entering data, without specialized encodings, for the first phase of jotting down items of information that we want to learn and know. This language must support Unicode for multi-language support. Memory data may come in a variety of media formats: text, images, audio, etc. When we are searching, however, symbolic language is a convenient interaction format. So, in the first instance, we can use pattern-matching transducers to convert multimedia formats into "alt texts" and categorize those in a knowledge reasoning structure. This may not strictly be necessary in the long run, but it's a useful place to begin: in particular, we need the ability to identify sub-parts of an image or audio segment in order to cross-reference them.

The basic syntactic format of the contextual note taking language takes the following form:

The SST Graph

N4L results in a graph representation, called the Semantic Spacetime (SST) graph.

If you've heard about Knowledge Graphs, such as those using the Resource Description Framework (RDF) and its related Web Ontology Language (OWL), then you'll know something about the idea already.

SST graphs are not RDF or OWL; indeed, they reject those early principles as flawed. Still, the differences between the two are subtle.

SST graphs impose a different kind of discipline on knowledge representations than RDF/OWL:

- SST graphs do not have XML schemas, nor are there formal ontologies.

- SST graphs use implicit typing based on relation (link) names.

- Graph nodes are focal points for any kind of data, though some text is usual.

- Graph links between nodes, i.e. graph relations, are unidirectional and may have any name. However, the names must be classified into one of four possible meta-types that describe spacetime semantics:

    - SIMILARITY - a degree of equivalence

    - LEADS TO - a causally ordered relationship

    - CONTAINS - is one thing a part of another?

    - PROPERTY - a descriptive or expressive property of a node

These classes make it easier and more meaningful to search the graph later, because their meanings are aligned with the processes of searching. The main problem with ontology and RDF is that they encourage you to model the world as a number of things of different types, rather than modelling the processes those things are involved in, i.e. the things we are interested in. If we make data searchable by design, we avoid getting into trouble later.

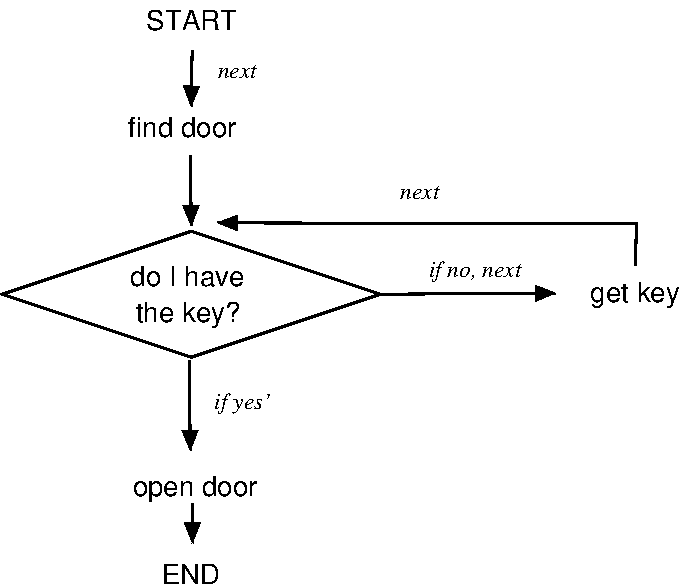
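The implicit typing described above can be sketched as a small data model. This is a minimal illustration, assuming a hypothetical registry `ARROWS` that classifies each relation name once; the names `STType`, `Link`, `Graph` and `search` are invented for the example and are not the project's API.

```python
from dataclasses import dataclass, field
from enum import Enum


class STType(Enum):
    """The four meta-types into which every relation name is classified."""
    SIMILARITY = "similarity"  # a degree of equivalence
    LEADSTO = "leads to"       # a causally ordered relationship
    CONTAINS = "contains"      # is one thing a part of another?
    PROPERTY = "property"      # a descriptive or expressive property


# Hypothetical registry: each relation name is classified once, and every
# link that uses that name inherits its meta-type (implicit typing).
ARROWS = {
    "next": STType.LEADSTO,
    "contains": STType.CONTAINS,
    "is like": STType.SIMILARITY,
    "has colour": STType.PROPERTY,
}


@dataclass(frozen=True)
class Link:
    src: str
    arrow: str
    dst: str

    @property
    def sttype(self) -> STType:
        return ARROWS[self.arrow]


@dataclass
class Graph:
    links: list = field(default_factory=list)

    def add(self, src: str, arrow: str, dst: str) -> None:
        # Any name is allowed, but only once it has been given a meta-type.
        if arrow not in ARROWS:
            raise ValueError(f"unclassified relation name: {arrow!r}")
        self.links.append(Link(src, arrow, dst))

    def search(self, node: str, sttype: STType) -> list:
        """Targets reachable in one step from `node` via one meta-type."""
        return [link.dst for link in self.links
                if link.src == node and link.sttype is sttype]


g = Graph()
g.add("Kitchen", "contains", "Kettle")
g.add("Kettle", "has colour", "red")
g.add("Boil water", "next", "Make tea")
print(g.search("Kitchen", STType.CONTAINS))  # ['Kettle']
```

The search is phrased in terms of the meta-type rather than the individual relation name, which is the sense in which the four classes align with the process of searching.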

A flow chart example

Everyone knows about flow charts. These can be rather trivial, or very complicated. They are the basis for finite state machines (FSMs), as well as for error and risk graphs. In N4L, we might write:

```
- flow chart

 @question Do I have the key?

 Start (next) Find Door (next) $question.1

 :: yes ::

 # conditionals are basically context
 $question.1 (next if yes) Open Door (next) End

 :: no ::

 $question.1 (next if no) Get key (next) $question.1
```
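Read as a state machine, the flow chart above is just a set of directed links, and the `:: yes ::` / `:: no ::` contexts decide which arrow to follow at the question. The following sketch hand-translates the example into Python tuples (a hypothetical layout, not what the N4L parser produces) and walks it, treating each answer as the context for the next branch.

```python
# The flow chart, hand-translated into (source, arrow, target, context) links.
# Context None means the arrow applies in any context.
LINKS = [
    ("Start", "next", "Find Door", None),
    ("Find Door", "next", "Do I have the key?", None),
    ("Do I have the key?", "next if yes", "Open Door", "yes"),
    ("Open Door", "next", "End", "yes"),
    ("Do I have the key?", "next if no", "Get key", "no"),
    ("Get key", "next", "Do I have the key?", "no"),
]


def walk(start, answers):
    """Follow arrows from `start` until a node has no outgoing arrows.

    At a branch point (more than one outgoing arrow), the next answer is
    taken as the current context and only arrows in that context are kept.
    Raises StopIteration if the answers run out at a branch point."""
    answers = iter(answers)
    path, node = [start], start
    while True:
        out = [link for link in LINKS if link[0] == node]
        if not out:
            return path  # no way forward: the end of the story
        if len(out) > 1:
            context = next(answers)
            out = [link for link in out if link[3] == context]
        node = out[0][2]
        path.append(node)


print(walk("Start", ["no", "yes"]))
```

With the answers `no` and then `yes`, the walk visits Start, Find Door, the question, Get key, the question again, Open Door and End: the loop back to the question is exactly the `$question.1` reference at the end of the `:: no ::` line.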

The picture looks like this:

In this case, we defined the arrows in the SSTconfig/* files.

- leadsto

# Define arrow causal directions ... left to right

+ is followed by (next) - is preceded by (prev)

+ then the next is (then) - previous (prior)

// Flow charts / FSMs etc

+ next if yes (ifyes) - is a positive outcome of (bifyes)

+ next if no (if no) - is anegitive outcome of (bifno)

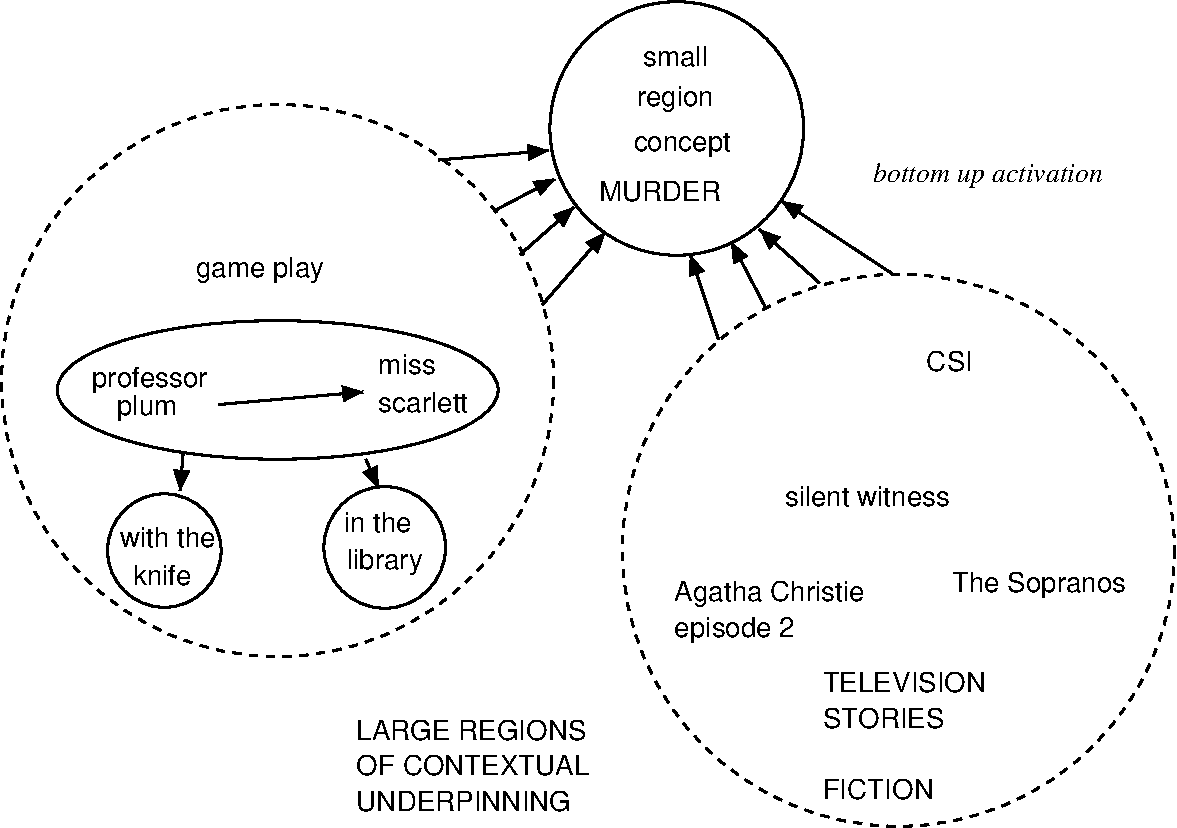
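The arrow definitions above follow a regular pattern: a section line naming the meta-type, then `+ forward (short) - backward (short)` pairs, with `#` and `//` comments. A minimal reader for lines of that shape might look like the sketch below. It assumes that names contain no parentheses, and it is not the project's own configuration parser; the `Arrow` record and its fields are hypothetical.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Arrow:
    name: str     # long name, e.g. "is followed by"
    short: str    # short name, e.g. "next"
    section: str  # meta-type section it was declared in, e.g. "leadsto"
    inverse: str  # short name of the opposite direction, e.g. "prev"


# "+ forward name (fwd) - backward name (bwd)"; names must not contain "(" or ")".
PAIR = re.compile(
    r"^\+\s*(?P<fwd>[^()]+?)\s*\((?P<fs>[^()]+)\)"
    r"\s*-\s*(?P<bwd>[^()]+?)\s*\((?P<bs>[^()]+)\)$"
)


def parse_arrows(text):
    """Index every declared arrow by both its long and its short name."""
    index, section = {}, None
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", "//")):
            continue  # blank line or comment
        if line.startswith("-"):
            section = line.lstrip("-").strip()  # section header, e.g. "- leadsto"
            continue
        m = PAIR.match(line)
        if m is None:
            raise ValueError(f"cannot parse arrow definition: {raw!r}")
        forward = Arrow(m["fwd"], m["fs"], section, m["bs"])
        backward = Arrow(m["bwd"], m["bs"], section, m["fs"])
        for arrow in (forward, backward):
            index[arrow.name] = arrow
            index[arrow.short] = arrow
    return index


CONFIG = """
- leadsto
    # Define arrow causal directions ... left to right
    + is followed by (next)     - is preceded by (prev)
    // Flow charts / FSMs etc
    + next if yes (ifyes)       - is a positive outcome of (bifyes)
"""

arrows = parse_arrows(CONFIG)
print(arrows["next if yes"].short, arrows["next"].inverse)  # ifyes prev
```

Indexing by both names is what lets the flow chart write `(next)` in one place and `(next if yes)` in another: either spelling resolves to the same declared arrow and its inverse.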

Dial M for Murder

Associations of clues and bits of information form forensic trails that are ideally suited to graph representations. You can imagine a crime-solving team entering all their evidence into a graph and searching it for possible connections, using inferences along the way. This is more powerful than simply applying logical rules to an ontology, because logic can never tell you any more than you explicitly stated in the beginning. Using 'fuzzy' inferences, on the other hand, we can perform lateral reasoning just like humans do. The goal is to be able to tell a plausible story about something.
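The simplest version of "searching for possible connections" is a shortest-path search over the evidence. The sketch below uses plain breadth-first search and ignores arrow direction, which is only a crude stand-in for the fuzzy, lateral inferences described above; the evidence items are hypothetical. It returns the chain of facts linking two items, each in its original direction, so that the result reads like the outline of a story.

```python
from collections import deque

# Hypothetical evidence, entered item by item as (source, relation, target).
EVIDENCE = [
    ("suspect", "owns", "latchkey"),
    ("latchkey", "was found under", "stair carpet"),
    ("stair carpet", "is next to", "front door"),
    ("front door", "was used by", "intruder"),
    ("intruder", "was paid by", "suspect"),
    ("witness", "saw", "intruder"),
]


def connect(facts, start, goal):
    """Shortest chain of facts linking `start` to `goal`, or None.

    Arrows are followed in either direction (breadth-first search), but
    each fact in the returned chain keeps its original direction."""
    neighbours = {}
    for fact in facts:
        src, _, dst = fact
        neighbours.setdefault(src, []).append((dst, fact))
        neighbours.setdefault(dst, []).append((src, fact))

    came_from = {start: None}  # node -> (previous node, fact that reached it)
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            break
        for nxt, fact in neighbours.get(node, []):
            if nxt not in came_from:
                came_from[nxt] = (node, fact)
                queue.append(nxt)

    if goal not in came_from:
        return None
    chain, node = [], goal
    while came_from[node] is not None:
        node, fact = came_from[node]
        chain.append(fact)
    return chain[::-1]


for src, rel, dst in connect(EVIDENCE, "witness", "latchkey"):
    print(src, rel, dst)
# witness saw intruder
# intruder was paid by suspect
# suspect owns latchkey
```

Breadth-first search returns only the shortest chain; the longer route through the stair carpet and the front door is another plausible story, and a richer search would enumerate and rank such alternatives rather than stopping at the first.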

The details of this graph are not yet defined, but you can imagine that they lead to an organization of thought something like the picture below.

Data entry is generally on a low level, item by item, but certain similarities lead to groupings of things that are related only by inference. This is what we mean by scaling of the graph.

We enter data from the bottom up, but we usually want to think about it and search it conceptually in a top-down way. That's a conundrum for logics, because logics do not have a natural notion of scale. Focusing on logical relationships can easily obscure set information. The SST principles help us to move between these two views.

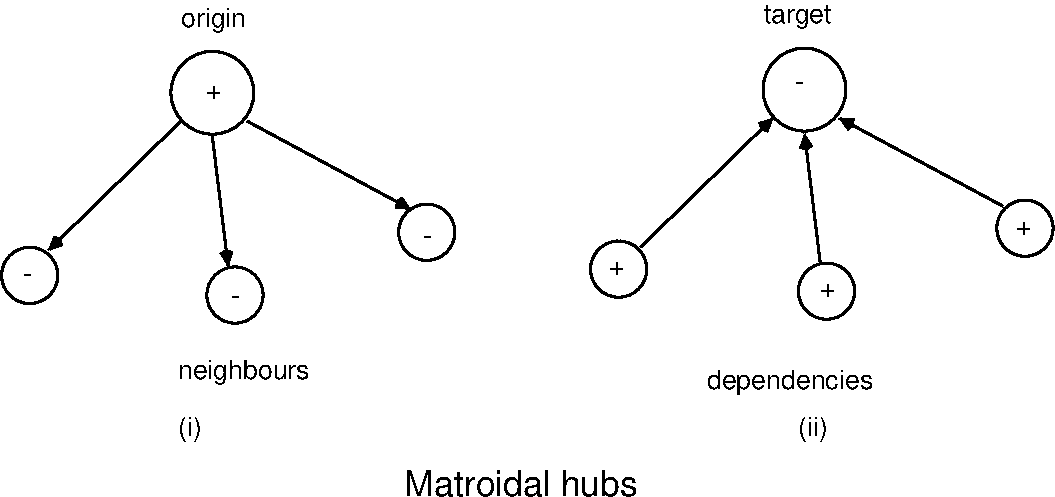
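One way to picture this scaling is to merge items joined by SIMILARITY links into groups, then redraw the item-level links between the groups. The sketch below does this with a union-find structure; the choice of union-find, the function names and the example items are assumptions for illustration, not a description of how SSTorytime performs the grouping.

```python
def group_by_similarity(nodes, similar_pairs):
    """Merge nodes joined by SIMILARITY links into groups using union-find."""
    parent = {n: n for n in nodes}

    def find(n):
        # Follow parent pointers to the group's representative,
        # halving the path as we go to keep later lookups short.
        while parent[n] != n:
            parent[n] = parent[parent[n]]
            n = parent[n]
        return n

    for a, b in similar_pairs:
        parent[find(a)] = find(b)

    groups = {}
    for n in nodes:
        groups.setdefault(find(n), []).append(n)
    return sorted(sorted(g) for g in groups.values())


def coarse_links(links, groups):
    """Redraw item-level links as links between the groups holding their ends."""
    label = {n: " / ".join(g) for g in groups for n in g}
    return sorted({(label[s], label[d]) for s, d in links if label[s] != label[d]})


# Hypothetical items, entered bottom-up.
nodes = ["phone call", "letter", "meeting", "payment", "bank transfer"]
similar = [("phone call", "letter"), ("payment", "bank transfer")]
leads_to = [("phone call", "meeting"), ("letter", "meeting"), ("meeting", "bank transfer")]

groups = group_by_similarity(nodes, similar)
print(groups)
# [['bank transfer', 'payment'], ['letter', 'phone call'], ['meeting']]
print(coarse_links(leads_to, groups))
# [('letter / phone call', 'meeting'), ('meeting', 'bank transfer / payment')]
```

Three item-level arrows collapse into two group-level ones, giving a coarser, top-down view (contact, then meeting, then money) that was never entered explicitly.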

Common structures in a graph

The most important structure in any directed graph is a hub. In Promise Theory, hubs imply so-called appointed roles for the nodes. If several arrows of the same type point to a node, then the node absorbs the property and becomes a "target" or shared property of the links. Conversely, if a node emits many arrows of the same type, it is a kind of distributor of that property. In other words, these structures imply many-to-one groupings, in which the "one" matroidal node represents the group. Groups of the four meta-types each have different meanings.

In a directed graph, there are two major types of matroid: one for incoming arrows and one for outgoing arrows.

We can use these structures to good effect when walking through the graph and analysing it. For example, if we see a hub that connects a number of things together, it acts as a kind of group leader for those things. If the arrow is of type contains, then the hub is clearly the container, and its name is the name on the box the items belong to. On the other hand, if all arrows point to a certain item and the arrow is "depends on" (inverse leadsto), then we know that this single node is a critical dependency, perhaps even a Single Point of Failure for the items! These are examples of how even semi-formal semantics provide simple insights.
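Spotting these hubs amounts to counting, for each node, how many arrows of each type it emits and absorbs. A minimal sketch follows, assuming links are stored as `(source, arrow, target)` triples and using a hypothetical threshold `min_degree` for what counts as a hub; the example links are invented.

```python
from collections import Counter


def hubs(links, min_degree=3):
    """Find nodes that emit or absorb many arrows of the same type.

    `links` are (source, arrow, target) triples. Returns two dicts keyed by
    (node, arrow) with the arrow count: fan-out distributors and fan-in
    absorbers."""
    out_deg = Counter((s, a) for s, a, _ in links)
    in_deg = Counter((t, a) for _, a, t in links)
    emitters = {k: n for k, n in out_deg.items() if n >= min_degree}
    absorbers = {k: n for k, n in in_deg.items() if n >= min_degree}
    return emitters, absorbers


# Hypothetical links.
LINKS = [
    ("toolbox", "contains", "hammer"),
    ("toolbox", "contains", "saw"),
    ("toolbox", "contains", "pliers"),
    ("web shop", "depends on", "database"),
    ("billing", "depends on", "database"),
    ("reports", "depends on", "database"),
]

emitters, absorbers = hubs(LINKS)
print(emitters)   # {('toolbox', 'contains'): 3}
print(absorbers)  # {('database', 'depends on'): 3}
```

Reading the results with the meta-types in mind: the node emitting `contains` arrows names the box, while the node absorbing `depends on` arrows is the candidate single point of failure.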